Stanford Researchers Decode Inner Speech with New BCI Technology

Scientists at Stanford University have developed a brain-computer interface (BCI) that decodes inner speech, a step toward easier communication for people with severe paralysis. Unlike traditional BCIs, which require users to physically attempt to speak, this system interprets silently imagined speech, so users can communicate without engaging their muscles.

Most existing BCIs record signals from the brain regions responsible for muscle control, so the user has to make an effort to speak. That effort can be exhausting for patients with conditions such as amyotrophic lateral sclerosis (ALS) or tetraplegia. Recognizing these limitations, researchers led by Benyamin Meschede Abramovich Krasa and Erin M. Kunz shifted focus to decoding inner speech, the silent dialogue we engage in during reading or reflection.

Innovative Approach to Decoding Inner Speech

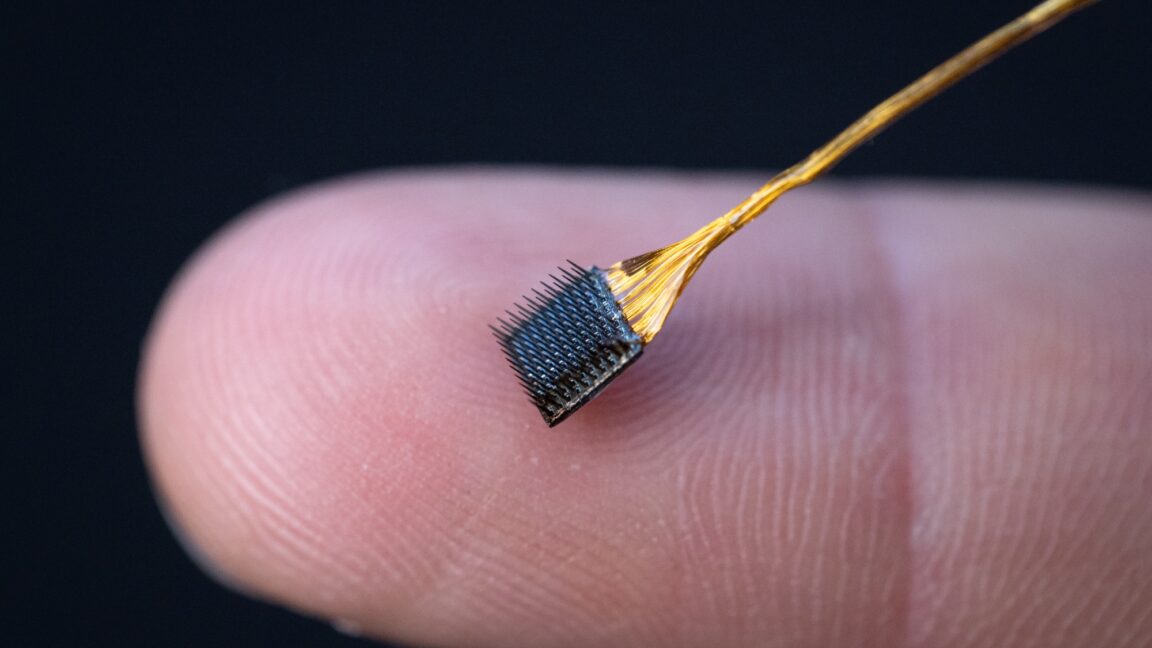

The research team began by collecting data to train artificial intelligence algorithms that translate neural signals associated with inner speech into words. The study involved four participants, all of whom had microelectrode arrays implanted in various areas of the motor cortex. The participants performed tasks such as listening to recorded words and reading silently.

Their findings revealed that the same brain regions responsible for attempted speech also contained signals representing inner speech. This raised the question of whether traditional BCIs, designed for attempted speech, might inadvertently decode inner thoughts. Testing confirmed the concern: the system could indeed be activated when participants imagined sentences displayed on a screen.

As Krasa noted, “We demonstrated that traditional BCI systems that were trained on attempted speech could be activated when a subject was looking at a sentence on the screen and imagined speaking that sentence in their head.”

Addressing Mental Privacy Concerns

The ability to extract words directly from thoughts naturally raises significant privacy concerns. While existing systems like the one developed at the University of California, Davis have avoided decoding inner speech, Krasa’s team anticipated the possibility and implemented safeguards to protect patient privacy.

Their first safeguard relied on subtle differences between the brain signals for inner and attempted speech. The team labeled inner speech signals as silent when training the AI decoder networks, so the decoders learned to ignore them, with promising results. The second safeguard was a “mental password” system, in which participants imagined saying the phrase “Chitty chitty bang bang” to activate the prosthesis. This method reached 98 percent accuracy, although it struggled with more complex phrases.
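
The gating idea behind the mental password can be illustrated with a short sketch. It is not the team's implementation: the `PasswordGate` class, its `feed` method, and the text-matching rule are hypothetical, and a real prosthesis would have to detect the password in neural activity rather than in already-decoded text.

```python
from typing import Optional


class PasswordGate:
    """Hypothetical gate: suppress decoder output until an unlock phrase is seen."""

    def __init__(self, password: str) -> None:
        self.password = self._normalize(password)
        self.unlocked = False

    @staticmethod
    def _normalize(text: str) -> str:
        # Compare case-insensitively and ignore extra whitespace.
        return " ".join(text.lower().split())

    def feed(self, decoded: str) -> Optional[str]:
        """Return the decoded phrase only once the gate is unlocked, else None."""
        if not self.unlocked:
            if self._normalize(decoded) == self.password:
                self.unlocked = True
            # Nothing is passed through while locked, including the password.
            return None
        return decoded


gate = PasswordGate("chitty chitty bang bang")
phrases = ["i am hungry", "Chitty chitty bang bang", "i am hungry"]
outputs = [gate.feed(p) for p in phrases]
```

In this toy run, the first phrase is discarded because the gate is still locked, the password unlocks it without being emitted, and only the third phrase reaches the output.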

With privacy measures in place, the team began testing the inner speech system using specific prompts. Patients imagined saying short sentences displayed on a screen, and the system decoded them with up to 86 percent accuracy when the vocabulary was limited to 50 words. Accuracy dropped to 74 percent when the vocabulary expanded to 125,000 words.
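
The article does not say how accuracy was scored. A common metric for speech decoders is word error rate (WER), the word-level edit distance between the intended and decoded sentences divided by the length of the intended sentence. The sketch below assumes that accuracy means 1 minus WER; the function name and the example sentences are illustrative and are not taken from the study.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


# Made-up example: one of five intended words is missing from the output.
wer = word_error_rate("i want some water please", "i want water please")
accuracy = 1 - wer
```

Under this assumption, one dropped word in a five-word sentence gives a WER of 0.2, or 80 percent accuracy.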

The limitations of the BCI became evident during tests of unstructured inner speech. In one task, participants were asked to memorize sequences of arrows displayed on a screen, the idea being that they might silently rehearse the directions, but the system’s performance at decoding this was only slightly above chance. Efforts to decode more complex thoughts led to results that Krasa described as “gibberish.”

Despite its current limitations, Krasa considers the inner speech neural prosthesis a proof of concept. “We didn’t think this would be possible, but we did it and that’s exciting! The error rates were too high, though, for someone to use it regularly,” he stated. Ongoing challenges include refining hardware and improving the precision with which brain signals are recorded.

The research team is now pursuing two promising avenues: one project investigates the speed advantage of an inner speech BCI compared to attempted speech alternatives, while the other explores the potential benefits for individuals with aphasia, a condition where patients can control their mouths but struggle to produce words.

As researchers continue to refine BCI technology, inner speech decoding could eventually give people with severe disabilities more effective communication tools.
